AI is transforming healthcare through a range of machine learning techniques, including supervised, unsupervised, and reinforcement learning, for outcome prediction and pattern recognition. Deep learning methods such as CNNs, RNNs, and transformers enable advanced analysis of medical imaging and health data. While computer vision aids diagnostic processes, challenges persist in data privacy, high-quality data collection, and the clinical validation of AI solutions. This blog from PIT Solutions explores AI’s transformative role in healthcare, emphasizing its significance, key applications, integration with AI & Data Science, challenges, and its future potential in AI app development.

Technical Applications of AI in Healthcare

- Medical Imaging and Diagnostics: AI has revolutionized the field of medical imaging and diagnostics, with Convolutional Neural Networks (CNNs) emerging as a powerful tool for analyzing medical images. These algorithms can process a wide range of complex medical images, including computed tomography (CT) scans, X-rays, and magnetic resonance imaging (MRI). These innovations have enabled rapid and accurate detection of abnormalities, such as tumors in radiological examinations or early indications of eye diseases in retinal images. The impact of these systems extends across various medical specialties, including radiology, pathology, cardiology and beyond.
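To make the CNN description concrete, the following minimal pure-Python sketch shows the sliding-window operation a convolutional layer applies to an image. The 5x5 "scan" and the hand-set vertical-edge kernel are illustrative assumptions. A real CNN learns many such kernels from labeled images, stacks them in layers, and runs on optimized frameworks rather than nested loops.

```python
# Minimal sketch of the operation at the core of a CNN layer. Like most deep
# learning frameworks, it computes a "valid" cross-correlation (kernel not
# flipped), with stride 1 and no padding.

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` and return the resulting feature map."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            # Weighted sum of the image patch currently under the kernel.
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        out.append(row)
    return out

def relu(feature_map):
    # Non-linearity applied after the convolution, as in a typical CNN layer.
    return [[max(0.0, v) for v in row] for row in feature_map]

# Hypothetical toy "scan": dark region on the left, bright region on the right.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]

# Hand-set vertical edge detector; in a trained CNN these weights are learned.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = relu(conv2d_valid(image, kernel))
for row in feature_map:
    print(row)
```

The feature map responds strongly where the window straddles the dark-to-bright boundary and stays at zero over the uniform region. Deeper layers combine low-level signals like this into detectors for larger structures.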

- Predictive Analytics for Disease Forecasting: AI-powered predictive models analyze historical patient data to forecast disease progression and likely responses to treatment.

- Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) models: RNNs are well suited to healthcare time-series analysis, such as predicting sepsis, heart failure, and hospital readmissions. They process sequential data step by step, carrying a hidden state that summarizes previous inputs. Standard RNNs struggle to retain information across long sequences because gradients tend to vanish or explode during training. LSTMs address this with gated memory cells that decide what to keep, add, and output at each step, which lets them handle the extended sequences common in medical prediction.

- Gradient Boosting Machines (GBMs) and Random Forests: GBMs and Random Forests are widely used for medical risk assessment, particularly for cardiovascular disease and cancer. GBMs build trees sequentially, with each tree correcting the errors of the previous ones. Random Forests instead train independent trees on random bootstrap samples of the data and average their predictions. Because of this averaging, Random Forests tend to be more robust to noisy clinical data, while GBMs can overfit unless their learning rate, tree depth, and number of trees are carefully tuned.
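The gating idea behind LSTMs can be illustrated with a single-unit cell in plain Python. The weights below are hand-picked assumptions, not trained values. The input sequence (one abnormal reading followed by quiet time steps) is also hypothetical. The point is only to show how the forget gate lets the cell state carry a signal across many steps, while a plain RNN's hidden state fades with these particular weights.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def rnn_step(x, h_prev, wx=2.0, wh=0.5):
    # Plain RNN: the only memory is h, which is squashed again at every step.
    return math.tanh(wx * x + wh * h_prev)

def lstm_step(x, h_prev, c_prev, p):
    """One step of a single-unit LSTM cell with parameters in dict `p`."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate: keep old memory?
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate: write new info?
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate: expose memory?
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate memory content
    c = f * c_prev + i * g   # additive update lets the cell state persist
    h = o * math.tanh(c)     # hidden state passed to the next step / layer
    return h, c

# Hand-picked (hypothetical) weights: forget gate biased open, input gate
# driven by x, and no candidate content when x == 0.
params = {
    "wf": 0.0, "uf": 0.0, "bf": 10.0,
    "wi": 5.0, "ui": 0.0, "bi": 0.0,
    "wo": 0.0, "uo": 0.0, "bo": 0.0,
    "wg": 2.0, "ug": 0.0, "bg": 0.0,
}

# Hypothetical sequence: one "event" (e.g. an abnormal reading), then 49 quiet steps.
sequence = [1.0] + [0.0] * 49

h_rnn = 0.0
h_lstm, c_lstm = 0.0, 0.0
c_after_event = None
for t, x in enumerate(sequence):
    h_rnn = rnn_step(x, h_rnn)
    h_lstm, c_lstm = lstm_step(x, h_lstm, c_lstm, params)
    if t == 0:
        c_after_event = c_lstm  # cell state right after the event

print(f"plain RNN hidden state after 50 steps: {h_rnn:.2e}")
print(f"LSTM cell state retained: {c_lstm / c_after_event:.3f} of its post-event value")
```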

- Drug Discovery and Genomics: Machine learning algorithms analyze vast datasets, such as genomic data linked to a disease, to detect potential drug targets and to predict a drug's efficacy and potential side effects. Techniques include:

- Generative Adversarial Networks (GANs): GANs are being applied to drug discovery for molecular design and single-cell data analysis. They pair two networks. A generator proposes new molecular structures, and a discriminator learns to tell generated molecules from real ones. Training the two against each other pushes the generator toward realistic candidates that can be steered toward desired properties. This helps accelerate drug development by exploring chemical space more broadly than traditional methods allow.

- Graph Neural Networks (GNNs): GNNs excel in drug discovery by effectively modeling protein-ligand interactions and molecular structures. Combined with CNNs for gene expression analysis, they provide accurate drug response predictions. Their ability to understand complex biological relationships makes them valuable for pharmaceutical research and therapeutic development.

- CRISPR-Based AI Models: Machine learning models like DeepCRISPR and CRISPR-M analyze genomic sequences to design optimal guide RNAs (gRNAs) that enhance targeting accuracy. These AI-driven tools reduce unintended edits, thereby increasing the safety and efficacy of CRISPR-based interventions. Deep learning models can accurately predict the activity and specificity of different Cas9 variants, allowing for the selection of the most suitable enzyme for a particular application.
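Tools such as DeepCRISPR score candidate guides with learned models, but guide design starts from a simpler step: enumerating the sites a given Cas enzyme can target. The sketch below assumes SpCas9, whose protospacer adjacent motif (PAM) is NGG. It scans only the forward strand. It also applies a 40-60% GC-content filter as a hypothetical stand-in for a real on-target/off-target scoring model. The function names are illustrative.

```python
import re

def find_spcas9_targets(dna, protospacer_len=20):
    """Return (start, protospacer, pam) for each forward-strand site where a
    protospacer is immediately followed by an NGG PAM (SpCas9 assumption)."""
    dna = dna.upper()
    # Zero-width lookahead so overlapping sites are all reported.
    pattern = re.compile(r"(?=([ACGT]{%d})([ACGT]GG))" % protospacer_len)
    return [(m.start(), m.group(1), m.group(2)) for m in pattern.finditer(dna)]

def gc_content(seq):
    return (seq.count("G") + seq.count("C")) / len(seq)

def candidate_guides(dna, min_gc=0.4, max_gc=0.6):
    # Hypothetical heuristic filter; ML tools replace this with learned
    # on-target activity and off-target risk scores.
    return [hit for hit in find_spcas9_targets(dna)
            if min_gc <= gc_content(hit[1]) <= max_gc]

# Hypothetical genomic region used only for illustration.
region = "CCTGACGTTACGATCGGATCCATTGGAGCTTACCGATCGTAGCTAGCAAGGTC"
for start, guide, pam in candidate_guides(region):
    print(start, guide, pam)
```

A real pipeline would also scan the reverse complement and check each guide against the whole genome for near-matches, which is where models like CRISPR-M come in.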

“Artificial intelligence will not replace doctors, but doctors who use AI will replace those who do not.” — Bertalan Meskó

Open-Source AI Models in Healthcare

- Meditron: The Meditron family comprises open-source medical large language models (LLMs), including Meditron-7B and Meditron-70B. Trained on medical literature and clinical guidelines, these models demonstrate improved performance across a variety of medical reasoning tasks. GitHub link: https://github.com/epfLLM/meditron

- BioMistral-7B: BioMistral-7B is an open-source LLM tailored for the biomedical domain. It is built on the Mistral foundation model and further pre-trained on data from PubMed Central. Hugging Face link: BioMistral/BioMistral-7B

- Med42-70B: Med42-70B is a publicly accessible clinical LLM developed by M42 Health. Based on Llama 2 and featuring 70 billion parameters, it is designed to deliver high-quality responses to a wide range of medical questions. Hugging Face link: m42-health/med42-70b

Challenges and Ethical Considerations

- Data Privacy and Security

- Homomorphic Encryption: Homomorphic Encryption (HE) is notable for its unique ability to allow computations on encrypted data. This means that data can remain encrypted, even during processing, ensuring its confidentiality. With HE, hospitals and research institutions can collaboratively analyze encrypted patient data, deriving insights without compromising individual privacy.

- Differential Privacy: Differential privacy is a mathematical framework that provides strong privacy guarantees when analyzing and sharing data. Its goal is to enable meaningful analysis for research, innovation, and other applications while provably limiting what can be learned about any individual. This is usually achieved by adding calibrated random noise to results. The noise ensures that the probability of any output changes by at most a factor of e^ε (where ε is the privacy parameter), whether or not a given person's record is included in the dataset.
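As an example of differential privacy in practice, here is a minimal sketch of the Laplace mechanism for a counting query. The patient cohort is synthetic and the function names are hypothetical. A production system would use a vetted DP library and track the cumulative privacy budget, since each additional query on the same data consumes more of ε.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two independent exponentials with mean `scale`
    # follows a Laplace(0, scale) distribution.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(records, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    Adding or removing one patient changes a count by at most 1
    (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Synthetic cohort: 1,000 patients, 137 of whom are flagged with sepsis.
cohort = [{"sepsis": i < 137} for i in range(1000)]
rng = random.Random(42)

noisy_strict = private_count(cohort, lambda r: r["sepsis"], epsilon=0.1, rng=rng)
noisy_loose = private_count(cohort, lambda r: r["sepsis"], epsilon=1.0, rng=rng)
print(f"epsilon=0.1: {noisy_strict:.1f}   epsilon=1.0: {noisy_loose:.1f}")
```

Smaller values of ε add more noise and give stronger privacy. Releasing both counts above spends a combined budget of 1.1 under basic composition.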

- Algorithmic Bias and Model Interpretability: AI models must be trained on diverse datasets to prevent biases in medical decision-making. Solutions include:

- Explainable AI (XAI): Explainable AI (XAI) techniques aim to make AI systems' decisions more transparent and interpretable, but they do not inherently give AI systems the ability to explain their own actions or predict their future behavior. XAI includes various methods and tools, among which are:

- SHAP (SHapley Additive exPlanations): A method based on game theory principles that assigns importance values to different features to explain individual predictions of machine learning models.

- LIME (Local Interpretable Model-agnostic Explanations): A technique that explains individual predictions by creating simplified, interpretable models that approximate the original model's behavior in the locality of a specific prediction.

- Bias Mitigation Algorithms: AI fairness requires addressing two primary sources of bias: model design and training data. Ensuring equitable AI-driven decisions involves data pre-processing techniques that transform, clean, and balance datasets to minimize discriminatory patterns. Additionally, fairness-aware algorithms incorporate specific rules and constraints that guide AI systems to produce outcomes that are equitable across all individuals and demographic groups.

- Diversity in AI Training Data: Reducing model disparities requires comprehensive data strategies. Transforming unstructured information into usable formats significantly expands the pool of available internal data. When necessary, creating synthetic data can supplement existing datasets to address gaps and improve model performance.
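One concrete pre-processing technique of the kind described under Bias Mitigation Algorithms is reweighing (Kamiran and Calders). It assigns each training example a weight so that a sensitive attribute and the outcome label become statistically independent in the weighted data. The sketch below uses a hypothetical two-group dataset. The resulting weights would typically be passed to a learner's sample-weight parameter.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Weight each example by P(g) * P(y) / P(g, y)."""
    n = len(labels)
    count_g = Counter(groups)
    count_y = Counter(labels)
    count_gy = Counter(zip(groups, labels))
    weights = []
    for g, y in zip(groups, labels):
        # Count of (g, y) expected if group and label were independent,
        # divided by the count actually observed.
        expected = count_g[g] * count_y[y] / n
        weights.append(expected / count_gy[(g, y)])
    return weights

def weighted_positive_rate(groups, labels, weights, group):
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights)
                   if g == group and y == 1)
    return positive / total

# Hypothetical data: group A receives the positive label far more often than B.
groups = ["A"] * 8 + ["B"] * 8
labels = [1, 1, 1, 1, 1, 1, 0, 0] + [1, 1, 0, 0, 0, 0, 0, 0]
weights = reweighing_weights(groups, labels)

for g in ("A", "B"):
    raw = sum(y for gg, y in zip(groups, labels) if gg == g) / groups.count(g)
    weighted = weighted_positive_rate(groups, labels, weights, g)
    print(g, "raw positive rate:", raw, "weighted:", round(weighted, 3))
```

After reweighing, both groups have the same weighted positive rate (the overall rate). The model therefore no longer sees group membership as predictive of the label, while every original record is kept.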

- Regulatory and Compliance Challenges: AI applications in healthcare must adhere to stringent regulatory frameworks to ensure safety and effectiveness. Compliance frameworks include:

- Good Machine Learning Practice (GMLP): GMLP refers to the guiding principles for machine learning-enabled medical devices published jointly by the U.S. FDA, Health Canada, and the UK MHRA in 2021. Generative AI represents a significant advancement in the medical field and reinforces the need to clearly define a product's intended purpose and regulatory classification. Such products require special consideration because their development is iterative and data-dependent. As the field advances, GMLP standards and industry consensus must evolve alongside it to ensure proper oversight and safety.

- Health Insurance Portability and Accountability Act (HIPAA): As AI's presence in healthcare grows, HIPAA compliance becomes critical. This U.S. law protects the privacy, confidentiality, and integrity of protected health information, including electronic health information. AI systems need extensive data for training, so de-identifying sensitive health information while preserving its usefulness presents significant challenges. Healthcare organizations must therefore stay vigilant and collaborate closely with AI developers to ensure that every application satisfies HIPAA requirements.
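To illustrate the de-identification challenge, here is a deliberately partial sketch inspired by HIPAA's Safe Harbor method, which requires removing 18 categories of identifiers. HIPAA's other route is Expert Determination. The field names, the record, and the restricted ZIP prefix are hypothetical, and only a few Safe Harbor rules are shown. This is not a compliance tool.

```python
# Hypothetical field names covering a few of the 18 Safe Harbor identifier categories.
DIRECT_IDENTIFIERS = {"name", "ssn", "mrn", "phone", "email", "street_address"}

def deidentify(record, restricted_zip3):
    """Apply a handful of Safe Harbor-style rules to one patient record.

    restricted_zip3: 3-digit ZIP prefixes whose geographic area has 20,000 or
    fewer people; in practice this set must come from current Census data.
    Not covered: the remaining identifier categories, free-text fields, and
    birth years that would reveal an age over 89.
    """
    clean = {}
    for field, value in record.items():
        if field in DIRECT_IDENTIFIERS:
            continue                                       # remove outright
        if field.endswith("_date"):
            clean[field] = value[:4]                       # ISO "YYYY-MM-DD" -> year only
        elif field == "zip":
            prefix = value[:3]                             # keep at most 3 ZIP digits
            clean[field] = "000" if prefix in restricted_zip3 else prefix
        elif field == "age":
            clean[field] = "90+" if value > 89 else value  # aggregate ages over 89
        else:
            clean[field] = value
    return clean

record = {
    "name": "Jane Doe", "mrn": "A-10293", "zip": "12345", "age": 93,
    "admission_date": "2023-04-17", "diagnosis_code": "I50.9",
}
# "123" is a hypothetical restricted prefix chosen only for this example.
print(deidentify(record, restricted_zip3={"123"}))
```

The diagnosis code survives, which preserves the record's analytic value. This is exactly the tension between de-identification and data utility described above.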

The Future of AI in Healthcare

AI is poised to play an even greater role in healthcare, with advancements in robotics, deep learning, and bioinformatics paving the way for innovative solutions. The future of AI in healthcare includes:

- AI-Augmented Robotic Surgery

- Reinforcement Learning-Based Robotic Assistants: Advances in AI and ML, including reinforcement learning, are enhancing surgical robotics by integrating sensor data with imaging technology. This combination of AI and robotics aims to improve surgical precision, patient outcomes, and operating room safety.

- Computer Vision for Surgical Navigation: AI-enhanced intraoperative imaging provides surgeons with real-time anatomical visualization, offering guidance during procedures. This computer vision technology creates new opportunities to analyze and improve surgical techniques at scale. As these applications continue to evolve in surgical settings, broader societal involvement becomes crucial to ensure safe, effective implementation that truly benefits surgical patients.

- Quantum Computing in AI Healthcare Applications

- Quantum ML for Drug Discovery: Quantum machine learning seeks to use quantum computing to accelerate and enhance the drug development process. It has the potential to make drug design and discovery more accurate and efficient.

- Quantum-Assisted Medical Imaging: Quantum computing may offer speedups for certain medical image analysis workloads. Quantum optimization methods are also being explored for hard optimization problems in segmentation and classification tasks.

Conclusion

AI in healthcare is redefining how diagnosis, treatment, and patient care are approached. Through AI & Data Science, healthcare is becoming more targeted, efficient, and accessible. The integration of AI app development, ethical practices, and robust regulatory compliance ensures sustainable adoption. By embracing responsible AI practices, the future of healthcare will be defined by precision medicine, real-time disease monitoring, and intelligent automation - ultimately improving patient outcomes and accelerating medical research innovation. At PIT Solutions, we are committed to building cutting-edge AI-powered healthcare solutions that drive innovation while prioritizing privacy, compliance, and ethical AI use.

Ready to Leverage AI in Healthcare?

Partner with PIT Solutions to build intelligent, secure, and scalable AI applications tailored for the healthcare industry. Contact us today to bring your vision to life through impactful digital solutions.

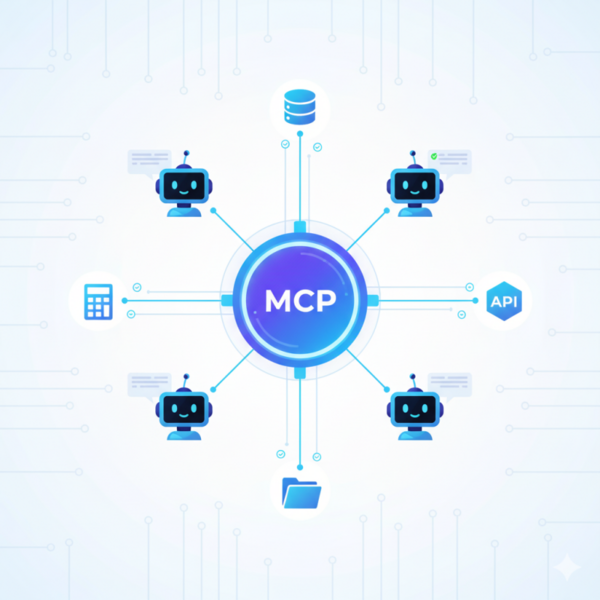

Explore how MCP enables scalable AI collaboration and agent interoperability.

Challenges in Multi-Agent Coordination

Large-scale AI systems often involve many specialized agents working in parallel. While this boosts overall capability, it also strains traditional single-agent paradigms. Each agent usually has a limited context window, so important information can be lost between turns. For example, a lead agent might plan a task and delegate parts of it to other agents. If that plan isn't stored outside the model's context, it may be dropped as the conversation grows. Integrating the tools and data sources each agent needs is also error-prone. Developers regularly run into problems passing data between agents, and incompatible tool interfaces frequently cause execution or parsing failures. Without a single standard way to share context, crucial data stays siloed, making coordination fragile and costly to scale.

Understanding the Model Context Protocol (MCP)

The Model Context Protocol standardizes how AI agents communicate with external resources and data. Think of MCP as a universal “port” for AI: a common interface that lets any compatible model connect to calculators, databases, APIs, and other services without custom glue code. In essence, MCP gives every agent in a system a shared way to exchange context and call services. Once MCP is in place, each agent can access contextual information (via Resources) and actionable functions (via Tools) through the same protocol. This addresses the challenges above: tool integrations follow a shared contract instead of bespoke code, and agents can share context and interoperate by design.
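The split between Resources and Tools can be sketched as a toy, in-process server that answers JSON-RPC requests. The method names (tools/list, tools/call, resources/read) and the general result shapes follow the MCP specification as we understand it. The resource URI, the add tool, and the handle() function are hypothetical. The initialization handshake and the transport are omitted, and a real server would normally be built with an official MCP SDK.

```python
import json

# Hypothetical resource: read-only context an agent can pull in.
RESOURCES = {
    "docs://project/readme": "Research assistant project notes.",
}

def _add(arguments):
    return arguments["a"] + arguments["b"]

# Hypothetical tool: an action the agent can invoke with arguments.
TOOLS = {
    "add": {
        "description": "Add two numbers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
        "handler": _add,
    },
}

def _error(request_id, code, message):
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}

def handle(request):
    """Dispatch one JSON-RPC request to a Tool or Resource handler."""
    method, params, rid = request["method"], request.get("params", {}), request["id"]
    if method == "tools/list":
        tools = [{"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
                 for n, t in TOOLS.items()]
        return {"jsonrpc": "2.0", "id": rid, "result": {"tools": tools}}
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(rid, -32602, f"Unknown tool: {params.get('name')}")
        value = tool["handler"](params.get("arguments", {}))
        content = [{"type": "text", "text": str(value)}]
        return {"jsonrpc": "2.0", "id": rid, "result": {"content": content, "isError": False}}
    if method == "resources/read":
        uri = params.get("uri")
        if uri not in RESOURCES:
            return _error(rid, -32602, f"Unknown resource: {uri}")
        contents = [{"uri": uri, "mimeType": "text/plain", "text": RESOURCES[uri]}]
        return {"jsonrpc": "2.0", "id": rid, "result": {"contents": contents}}
    # JSON-RPC 2.0 standard error code for an unsupported method.
    return _error(rid, -32601, f"Method not found: {method}")

request = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
           "params": {"name": "add", "arguments": {"a": 2, "b": 3}}}
print(json.dumps(handle(request)))
```

Because every tool and resource is reached through the same request shape, an agent needs no per-service integration code. It only needs to know the method names and read the schemas that tools/list returns.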

Data and Transport Layers in MCP

MCP defines two main protocol layers: the Data Layer and the Transport Layer. The Data Layer is based on JSON-RPC 2.0 and specifies the message formats agents use. It covers lifecycle management (such as initialization and capability negotiation), context and action primitives, and notifications. These JSON-RPC messages are carried by the Transport Layer. For remote servers this can be Streamable HTTP (HTTP with optional streaming); for local processes it can be standard input/output (stdio). Because client and server speak the same JSON-RPC dialect regardless of transport, they can communicate over sockets or pipes without extra integration code.
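With the stdio transport, each JSON-RPC message is framed as a single line of JSON on the process's standard input or output. The sketch below writes a client's opening messages into an in-memory buffer standing in for that pipe, then reads them back. It distinguishes requests, which carry an id and expect a response, from notifications, which have no id. The client name and the protocolVersion value are illustrative assumptions: use the version your SDK negotiates. The server's responses are omitted.

```python
import io
import json

def write_message(stream, message):
    # json.dumps escapes any newline inside string values as "\n",
    # so each serialized message occupies exactly one line.
    stream.write(json.dumps(message) + "\n")

def read_messages(stream):
    for line in stream:
        if line.strip():
            yield json.loads(line)

def is_notification(message):
    # JSON-RPC 2.0: a request without an "id" is a notification (no response).
    return "method" in message and "id" not in message

wire = io.StringIO()  # stands in for the server process's stdin pipe
write_message(wire, {
    "jsonrpc": "2.0", "id": 1, "method": "initialize",
    "params": {
        "protocolVersion": "2025-06-18",
        "capabilities": {},
        "clientInfo": {"name": "lead-agent", "version": "0.1.0"},
    },
})
write_message(wire, {"jsonrpc": "2.0", "method": "notifications/initialized"})
write_message(wire, {"jsonrpc": "2.0", "id": 2, "method": "tools/list"})

wire.seek(0)
messages = list(read_messages(wire))
for msg in messages:
    kind = "notification" if is_notification(msg) else "request"
    print(kind, msg["method"])
```

Swapping the buffer for a subprocess pipe or an HTTP request body leaves the message objects unchanged. This is the separation between the Data Layer and the Transport Layer described above.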

Persistence and Memory in Multi-Agent Systems

MCP makes it straightforward for agents to use external memory and persistence. Because the protocol is independent of any particular LLM, an MCP server can offer write capabilities or wrap a database or vector store as a resource. In practice, this lets an agent deliberately persist its state outside the short-lived conversation. For example, a lead researcher agent can draft a literature review plan and store it in an external memory server via MCP before launching subtasks. Later, even if the plan has dropped out of the agent's context window, the agent can retrieve it and continue without losing progress.

After completing tasks, agents can save key information to external memory and summarize finished phases of work. Rather than losing progress, they can start a fresh agent instance and reload the relevant context from memory when needed. This approach prevents context overflow and maintains continuity in long, complex workflows. In MCP terms, a memory store is just another server: it might offer a readMemory resource and a writeMemory tool. Any connected agent can write to or read from this memory server as needed.
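The memory pattern above can be sketched with a hypothetical in-process stand-in for a memory server. The tool name writeMemory comes from the paragraph above. Exposing entries as resources under memory://<key> (the readMemory side) and the ResearchAgent class are assumptions made for illustration. Recreating the agent simulates a fresh instance whose context window starts empty.

```python
import json

class MemoryServer:
    """Hypothetical in-process stand-in for an MCP memory server."""

    def __init__(self):
        self._store = {}

    def call_tool(self, name, arguments):
        # Tool side: writeMemory mutates server state.
        if name != "writeMemory":
            raise ValueError(f"unknown tool: {name}")
        self._store[arguments["key"]] = arguments["value"]
        return {"content": [{"type": "text", "text": f"stored {arguments['key']}"}]}

    def read_resource(self, uri):
        # Resource side (readMemory): entries are exposed as memory://<key>.
        prefix = "memory://"
        if not uri.startswith(prefix):
            raise ValueError(f"unsupported URI: {uri}")
        return self._store[uri[len(prefix):]]


class ResearchAgent:
    def __init__(self, name, memory):
        self.name = name
        self.memory = memory
        self.context = []  # stands in for the model's limited context window

    def checkpoint(self, key, value):
        self.memory.call_tool("writeMemory", {"key": key, "value": json.dumps(value)})

    def restore(self, key):
        value = json.loads(self.memory.read_resource(f"memory://{key}"))
        self.context.append(value)
        return value


memory = MemoryServer()
plan = {"question": "Summarize the latest findings on AI safety",
        "subtopics": ["evaluations", "interpretability", "governance"]}

lead = ResearchAgent("lead-1", memory)
lead.context.append(plan)
lead.checkpoint("plan", plan)  # persist before delegating any work

# The context is exhausted: start a fresh instance with an empty context window.
lead = ResearchAgent("lead-2", memory)
restored_plan = lead.restore("plan")
print(lead.name, "resumed with subtopics:", restored_plan["subtopics"])
```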

Shared persistence also aids collaboration among agents. Rather than passing large chunks of text directly, agents write intermediate results to a common store. One useful pattern is the “artifact” technique. A sub-agent saves its structured result (for example, a JSON report or file) to a file-system MCP server and returns a small reference (or file path) to the lead agent. This greatly reduces token usage and avoids passing long chains of data between agents. Later, any agent can retrieve these results by reading the corresponding resource from that server. In MCP, these external stores (databases, files, and so on) are treated as first-class context providers.
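Here is a minimal sketch of the artifact technique, assuming a local temporary directory in place of a file-system MCP server. The hypothetical sub-agent writes its full report to disk and returns only a path. The lead agent loads the report when it needs it. Function names and the report structure are illustrative.

```python
import json
import tempfile
from pathlib import Path

def search_subagent(topic, artifact_dir):
    # Hypothetical sub-agent output: a large structured report.
    report = {
        "topic": topic,
        "papers": [
            {"id": i, "title": f"Paper {i} on {topic}", "summary": "Key findings ... " * 20}
            for i in range(25)
        ],
    }
    path = Path(artifact_dir) / f"{topic.replace(' ', '_')}.json"
    path.write_text(json.dumps(report), encoding="utf-8")
    # Only a short reference travels back to the lead agent.
    return {"artifact": str(path), "paper_count": len(report["papers"])}

def lead_load(reference):
    # The lead agent dereferences the artifact only when it needs the details.
    return json.loads(Path(reference["artifact"]).read_text(encoding="utf-8"))

with tempfile.TemporaryDirectory() as artifact_dir:
    reference = search_subagent("AI safety", artifact_dir)
    artifact_size = Path(reference["artifact"]).stat().st_size
    reference_size = len(json.dumps(reference))
    report = lead_load(reference)

print(f"reference: {reference_size} characters, artifact: {artifact_size} bytes")
```

The message passed between agents stays small and constant-sized no matter how large the report grows. This is where the token savings come from.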

Notifications play a key role in coordination and memory. MCP servers can notify connected clients when their data changes. For example, a client can subscribe to a resource and receive a notifications/resources/updated message whenever that resource changes. A shared database server might alert subscribed clients each time a new record is added, prompting agents to reload the relevant information. In this way, even agents working in parallel maintain a common understanding of the shared data.
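The subscribe-and-notify flow can be sketched as follows. The notification method name notifications/resources/updated follows the MCP specification. As in the specification, the notification carries only the resource URI, so each agent re-reads the data. The SharedStore and ListeningAgent classes and the db://findings URI are hypothetical, and callbacks stand in for messages sent over a transport.

```python
class SharedStore:
    """Hypothetical MCP-style server holding records under resource URIs."""

    def __init__(self):
        self._records = {}
        self._subscribers = {}  # uri -> callbacks, one per subscribed client

    def subscribe(self, uri, callback):
        # Mirrors a client's resources/subscribe request.
        self._subscribers.setdefault(uri, []).append(callback)

    def read(self, uri):
        # Mirrors resources/read: return a snapshot of the current records.
        return list(self._records.get(uri, []))

    def add_record(self, uri, record):
        self._records.setdefault(uri, []).append(record)
        notification = {
            "jsonrpc": "2.0",
            "method": "notifications/resources/updated",
            "params": {"uri": uri},
        }
        for callback in self._subscribers.get(uri, []):
            callback(notification)


class ListeningAgent:
    def __init__(self, name, store, uri):
        self.name = name
        self.store = store
        self.view = []
        store.subscribe(uri, self.on_notification)

    def on_notification(self, notification):
        # The notification only says *what* changed; the agent re-reads it.
        self.view = self.store.read(notification["params"]["uri"])


store = SharedStore()
uri = "db://findings"
agents = [ListeningAgent(n, store, uri) for n in ("summarizer", "citation-checker")]
store.add_record(uri, {"claim": "placeholder claim 1", "source": "paper-1"})
store.add_record(uri, {"claim": "placeholder claim 2", "source": "paper-2"})
for agent in agents:
    print(agent.name, "sees", len(agent.view), "records")
```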

Proof of Concept: Collaborative Research Architecture

Consider a collaborative research use case to illustrate these concepts. Suppose a question like “Summarize the latest findings on AI safety” is sent to a Lead Researcher bot. The lead agent connects to a Memory service, a Document Search service, and a Citation service via MCP. To avoid losing context, the lead agent immediately writes its planned strategy (for example, breaking the topic into subtopics) into the memory store.

Next, the lead agent spawns several specialized sub-agents, each handling a different task (such as “search recent conference papers,” “extract key points,” or “compile citations”). Although they all use the same MCP servers, each sub-agent runs its own MCP client. For example, one “search” sub-agent might use the Document Search server’s API tool to fetch papers, then use a summary tool on those documents. It writes its results back to the memory server as it goes. Meanwhile, a “citation” sub-agent could take the collected information and use a citation-matching tool on the Citation server to find sources for each assertion.

All agents communicate using MCP-defined primitives. They treat the common memory, search APIs, and other services as standardized tools and resources. Sub-agents can notify others when they finish tasks and have new data available. For instance, the Memory server might signal the lead agent that a batch of summaries is ready from one sub-agent. After the lead agent retrieves and integrates these results, it can initiate more subtasks. Throughout this process, no agent needs to know the internal details of another agent or server—every component simply speaks the MCP protocol.
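The research workflow above can be simulated end to end in a single process. In this hypothetical sketch, a thread-safe dictionary plays the Memory server and callbacks play change notifications. The search and citation sub-agents are stubs that write placeholder results instead of calling real Document Search or Citation tools. As a simplification, the citation sub-agents run in parallel with the search sub-agents rather than waiting for their output.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

class SharedMemory:
    """Hypothetical memory server: thread-safe store plus change notifications."""

    def __init__(self):
        self._data = {}
        self._lock = threading.Lock()
        self._listeners = []

    def on_change(self, callback):
        self._listeners.append(callback)

    def write(self, key, value):
        with self._lock:
            self._data[key] = value
        for callback in self._listeners:
            callback(key)  # stands in for a resources/updated notification

    def read(self, key):
        with self._lock:
            return self._data[key]


def search_subagent(memory, subtopic):
    # A real sub-agent would call a Document Search tool and a summary tool.
    memory.write(f"summary/{subtopic}", f"summary of recent papers on {subtopic}")


def citation_subagent(memory, subtopic):
    # A real sub-agent would call a citation-matching tool on the Citation server.
    memory.write(f"citations/{subtopic}", [f"{subtopic}-ref-1", f"{subtopic}-ref-2"])


def lead_researcher(question):
    memory = SharedMemory()
    notified = []
    notified_lock = threading.Lock()

    def on_change(key):
        with notified_lock:
            notified.append(key)

    memory.on_change(on_change)
    # 1. Persist the plan before delegating, so it survives context loss.
    memory.write("plan", {"question": question,
                          "subtopics": ["evaluations", "interpretability", "governance"]})

    # 2. Fan out to sub-agents; each writes its results back to shared memory.
    subtopics = memory.read("plan")["subtopics"]
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(agent, memory, s)
                   for s in subtopics
                   for agent in (search_subagent, citation_subagent)]
        for future in futures:
            future.result()  # re-raise any sub-agent error

    # 3. Integrate results by reading them back from memory.
    report = {s: {"summary": memory.read(f"summary/{s}"),
                  "citations": memory.read(f"citations/{s}")}
              for s in subtopics}
    return report, notified


report, notified = lead_researcher("Summarize the latest findings on AI safety")
print(sorted(report))
print(len(notified), "change notifications received")
```

Persisting the plan before fanning out means a restarted lead agent could recover the subtopics from memory. Every sub-agent only reads and writes through the shared store, so adding a new kind of sub-agent requires no changes to the existing ones.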

Benefits of MCP-Enabled Multi-Agent Systems

- Context Continuity: Agents share common resources and storage, so they rarely need to restart tasks from scratch.

- Agent Specialization: Each agent can focus on its area of expertise while still collaborating effectively through MCP.

- Decentralized Coordination: Any agent can take on tasks by connecting to the MCP servers. This reduces dependence on a single coordinating agent and the bottlenecks that come with it.

- Interoperability and Extensibility: New data sources and tools can be integrated by exposing them through an MCP server, which keeps the system flexible as requirements change.

- Consistent Tooling and Fewer Errors: A uniform protocol reduces mismatches and execution errors that often arise in ad hoc integrations.

How PIT Solutions Enhances Multi-Agent Collaboration

PIT Solutions leverages the power of MCP to design and implement robust, collaborative multi-agent systems. Our team builds custom MCP-based architectures that allow diverse AI tools to plug seamlessly into a shared context bus. This means your agents can share knowledge and coordinate tasks without losing critical information. We also provide expertise in integrating new data sources and tools into the MCP ecosystem, ensuring your system remains flexible and scalable. By partnering with PIT Solutions, organizations can harness MCP to streamline complex workflows, accelerate innovation, and reduce the engineering effort required for multi-agent coordination. Contact PIT Solutions to integrate MCP into your AI workflows.